OK - a really geeky little tutorial, this one. If you've never felt the urge to flush your DNS cache, then don't worry, that's quite normal, many people live long and happy lives without ever doing so, and you should feel free to ignore this post and go about your other business.

A little bit of background...

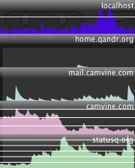

DNS lookups, as many of my readers will be aware, are cached. The whole DNS system would crumble and fall if, whenever your PC needed to look up statusq.org, say, it had to go back to the domain's name server to discover that the name corresponded to the IP address 74.55.156.82. It would need to do so before it could even start to get anything from the server, so every connection would also be painfully slow. To avoid this, the information, once you've asked for it the first time, is probably cached by your browser, and your machine, and, if you're at work, your company's DNS server, and their ISP's DNS server... and it's only if none of those know the answer that it will go back to the statusq.org domain's official name server - GoDaddy, in this case - to find out what's what.
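If it helps to see that chain of caches written down, here's a very rough sketch in Python. It's a toy, not how a real resolver works: the `resolve` function and the cache labels are made up for illustration, each cache is just a dictionary, and in real life each layer asks the next one on your behalf rather than your PC trying them all in turn.

```python
def resolve(name, caches, authoritative):
    """Ask each cache in turn; fall back to the authoritative server."""
    for label, cache in caches:
        if name in cache:
            return cache[name], label
    address = authoritative[name]
    # Every layer on the way back remembers the answer for next time.
    for _, cache in caches:
        cache[name] = address
    return address, "authoritative name server"


caches = [("browser", {}), ("operating system", {}),
          ("company DNS", {}), ("ISP DNS", {})]
authoritative = {"statusq.org": "74.55.156.82"}

print(resolve("statusq.org", caches, authoritative))
# ('74.55.156.82', 'authoritative name server')
print(resolve("statusq.org", caches, authoritative))
# ('74.55.156.82', 'browser')
```

The first lookup goes all the way back to the source; the second never leaves the browser.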

Of course, all machines need to do that from time to time, anyway, because the information may change and their copy may be out of date. Each entry in the DNS system therefore can be given a TTL - a 'Time To Live' - which is guidance on how frequently the cached information should be flushed away and re-fetched from the source.
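To make the TTL idea concrete, here's a minimal sketch of a cache that forgets entries once their time is up. The `TtlCache` class is purely illustrative (it isn't taken from any real resolver), and it assumes the cache honours the TTL exactly, which real ones don't always do.

```python
import time


class TtlCache:
    """Toy DNS cache: each entry expires `ttl` seconds after it was stored."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entries = {}  # name -> (address, expiry time)

    def put(self, name, address, ttl):
        self._entries[name] = (address, self._clock() + ttl)

    def get(self, name):
        """Return the cached address, or None if missing or expired."""
        entry = self._entries.get(name)
        if entry is None:
            return None
        address, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[name]  # stale: forget it so the caller re-fetches
            return None
        return address

    def flush(self):
        """Throw everything away, whatever the TTLs say."""
        self._entries.clear()


cache = TtlCache()
cache.put("statusq.org", "74.55.156.82", ttl=3600)  # one hour
print(cache.get("statusq.org"))
```

A `None` from `get` is the cue to go back and ask the next server along.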

On GoDaddy, the TTL defaults to one hour - really rather a short period, and since they're the largest domain registrar, this probably causes a lot of unnecessary traffic on the net as a whole. If you're confident that your server is going to stay on the same IP address for a good long time, you should set your TTLs to something more substantial - perhaps a day, or even a week. This will help to distribute the load on the network, reduce the likelihood of failed connections, and, on average, speed up interactions with your server. The reason people don't regularly set their TTL to something long is that, when you do need to change the mapping, perhaps because your server has died and you've had to move to a new machine, the old values may hang around in everybody's caches for quite a while, and that can be a nuisance.

It's useful to think about this when making DNS changes, because you, at least, will want to check fairly swiftly that the new values work OK. There's nothing worse than making a typo in the IP address of an entry with a long TTL, and having all of your customers going to somebody else's site for a week.

So, if you know you're going to be making changes in the near future, shorten the TTL on your entries a few days in advance. Machines picking up the old value will then know to treat it as more temporary. You can lengthen the TTLs again once you know everything is happy.
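The timing here is simple arithmetic, sketched below. The `plan_dns_change` function and the dates are invented for the example, and it assumes every cache along the way respects the TTL it was given.

```python
from datetime import datetime, timedelta


def plan_dns_change(lowered_at, old_ttl, short_ttl):
    """Return (earliest_safe_change, worst_case_stale_window)."""
    # A cache that fetched the record just before the TTL was lowered
    # may keep it for the full old TTL.
    earliest_safe_change = lowered_at + old_ttl
    # After that point, no well-behaved cache should hold the old value
    # for longer than the short TTL.
    return earliest_safe_change, short_ttl


lowered_at = datetime(2024, 1, 1, 9, 0)  # illustrative date
safe_from, stale_for = plan_dns_change(
    lowered_at, old_ttl=timedelta(days=7), short_ttl=timedelta(minutes=5)
)
print(safe_from)  # 2024-01-08 09:00:00
print(stale_for)  # 0:05:00
```

In other words, with a one-week TTL you need to shorten it a full week ahead to be sure the short value has reached everybody.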

Secondly, just before you make the change, try not to look up the old address at all (by pointing your browser at it, for example), so that it doesn't end up in your caches. This goes for new domains, too - the domain provider will probably set the DNS entry to point at some temporary page initially - and if you try out your shiny new domain name immediately, you'll then have to wait a couple of hours before you can access your real server that way. Make the DNS change first, before your machine has ever looked the name up and so put it in its own cache and any intervening ones.

Finally, once you've made a change, you may be able to encourage your machines to use the new value more quickly by flushing their local caches. This won't help so much if they are retrieving it via an ISP's caching proxy, for example, but it's worth a try.

Here's how you can use the command line to flush the cache on a few different platforms. Please feel free to add any others in the comments:

On recent versions of Mac OS X:

sudo dscacheutil -flushcache

On older versions of OS X:

sudo lookupd -flushcache

On Windows:

ipconfig /flushdns

On Linux, if your machine is running the nscd daemon:

sudo /etc/rc.d/init.d/nscd restart
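If you find yourself doing this on several machines, a small script can pick the right command for you. This is only a sketch: the `flush_command` helper is made up for the example, it knows just the commands listed above (the 'recent OS X' one for Macs), and it only prints what it would run rather than running it.

```python
import platform

# Commands copied from the list above; which one applies depends on the OS
# version and, on Linux, on whether nscd is actually installed.
FLUSH_COMMANDS = {
    "Darwin": ["sudo", "dscacheutil", "-flushcache"],
    "Windows": ["ipconfig", "/flushdns"],
    "Linux": ["sudo", "/etc/rc.d/init.d/nscd", "restart"],
}


def flush_command(system=None):
    """Return the flush command for `system`, or None if we don't know one."""
    if system is None:
        system = platform.system()
    return FLUSH_COMMANDS.get(system)


if __name__ == "__main__":
    command = flush_command()
    if command is None:
        print("No known flush command for", platform.system())
    else:
        print("Would run:", " ".join(command))
```

Swapping the `print` for `subprocess.run(command)` would actually do the flush, if you trust the table.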

If you're actually running a DNS server, for example for your organisation's local network:

On Linux running bind9:

rndc flush

On Linux running bind8:

ndc flush

On Ubuntu/Debian running named:

/etc/init.d/named restart
